Future Tech

Unleashing the Beast: A Deep Dive Review of the iMac Pro i7 4K

In today’s computing landscape, where many all-in-one computers prioritize style over substance, the iMac Pro i7 4K emerges as...

Unmasking the Phantom Costs: Your Guide to Erasing Invisible Financial Leaks

The invisible drain on your bottom line. Every thriving business tackles obvious expenses. What quietly weakens the balance sheet...

The Next Level: How Game Theory and Competitive AI Are Reshaping Entertainment

From play to strategy: why it matters now. Entertainment has entered a strategic era. Game theory and competitive AI...

Stealth in the Digital Shadows: The Rise of Residential Proxies

Discover how residential proxies enhance your online privacy and security, masking your IP address and protecting your digital footprint.

Data Meets the Court: How Technology Is Transforming Volleyball Betting and Predictions

Explore how technology revolutionizes volleyball betting, enabling accurate predictions and informed decisions. Discover the future of sports wagering!

The Most Common Mistakes to Avoid with a New Crypto Wallet

Master secure cryptocurrency storage and avoid costly mistakes. Learn essential tips for managing your digital assets and protecting your investments.

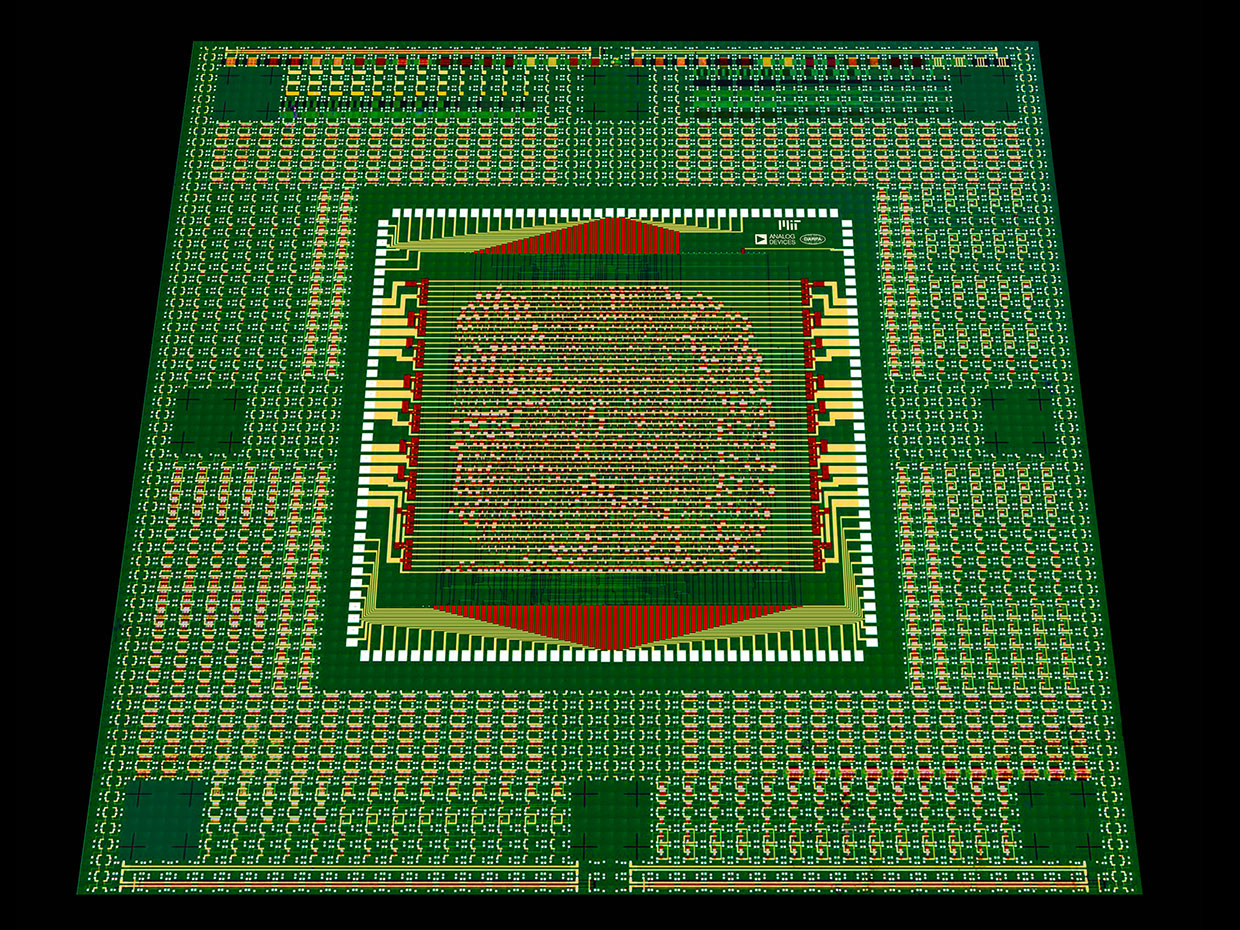

Carbon Nanotube Processors Hit Major Milestone: First Commercial Chip Success

Discover the breakthrough in computer chip technology using carbon nanotubes. Learn how this innovation achieves superior performance and paves the...

The Future of Travel in Japan: Trends Shaping the Industry

Japan has always been a dream destination, blending ancient traditions with cutting-edge technology. From neon-lit cityscapes to serene temples, it...

How Artificial Intelligence Is Personalising Casino Bonus Offers

Many of the iGaming industry’s most successful operators leverage a range of highly sophisticated AI-powered tools and applications to enhance...

What Effect Do Cryptocurrencies Have on the Gaming Industry?

Crypto is having a huge impact on the gaming industry. While it hasn’t gripped quite as hard as the more...
